AI for Human Propaganda

At a media forum over the weekend in Zhengzhou, the capital of Henan province, foreign influencers took the stage to explain how their lives in China had offered a more “authentic” understanding of the country. Indian travel blogger Anayat Ali and Belgian influencer Lucas Deckers said that immersion in local life had given them a more realistic portrait of China than headlines alone could convey — the subtext being that Western media coverage of the country is inherently biased and deeply unfair. Also present was Adam Foster, head of the US-based Helen Foster Snow Foundation, whose mission is to preserve the legacy of Edgar Snow, the journalist and author of Red Star Over China, who today remains for China’s leadership the paragon of the useful foreign journalist.

In a twist that would almost certainly perplex professional journalists elsewhere in the world, this talk about authenticity in portrayals of China unfolded at a forum uncritically proclaiming the virtues of artificial intelligence for media production. Understand the context, however, and this alliance of AI and untruth makes perfect sense, throwing into sharp relief AI’s emerging role in both domestic media control and global propaganda and disinformation.

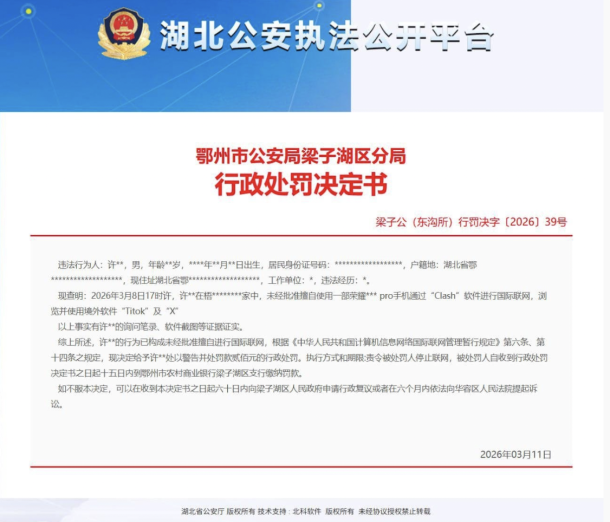

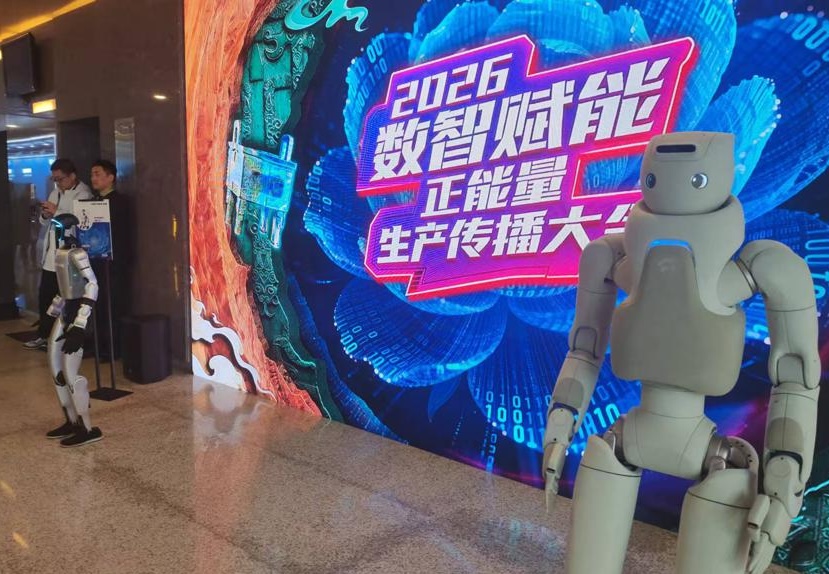

The theme of this year’s Internet Media Forum, co-organized by the Cyberspace Administration of China (CAC) and held in Henan province on March 28–29, was the “2026 Digital Intelligence Empowerment: Positive Energy Production and Dissemination Conference.” That is a mouthful, but the concept is simple enough: AI has the power to revolutionize the Chinese Communist Party’s demand that journalism and media serve its interests by emphasizing positives and suppressing critical coverage.

Across much of the world, the rise of artificial intelligence has prompted fierce and often anguished debate about the future of the media, and the role of the journalist. When is AI a “strategic ally” for the truth-seeking journalist? How can we balance the valuable aspects of AI with its myriad dangers? How can we make sure that substantive, relevant and even hard-hitting journalism — so critical for democracy — can be discovered amid the inundation of synthetic text, image and video?

Adding to a rising tide of output on the subject, the Center for News, Technology and Innovation (CNTI) weighed in with a report earlier this month. “For newsrooms, the use of generative AI tools offers benefits for productivity and innovation,” the report said coolly. “At the same time, it risks inaccuracies, ethical issues and undermining public trust.” At a recent press talk in Vietnam on AI and the “crisis of trust” facing news media, hosted by the Embassies of Canada, Norway, New Zealand, and Switzerland in collaboration with the United Nations Development Programme, UNDP representative Ramla Khalidi returned to basics: “The most important thing in journalism, for me, is trust. When trust is lost, you are no longer a voice that can be relied upon.”

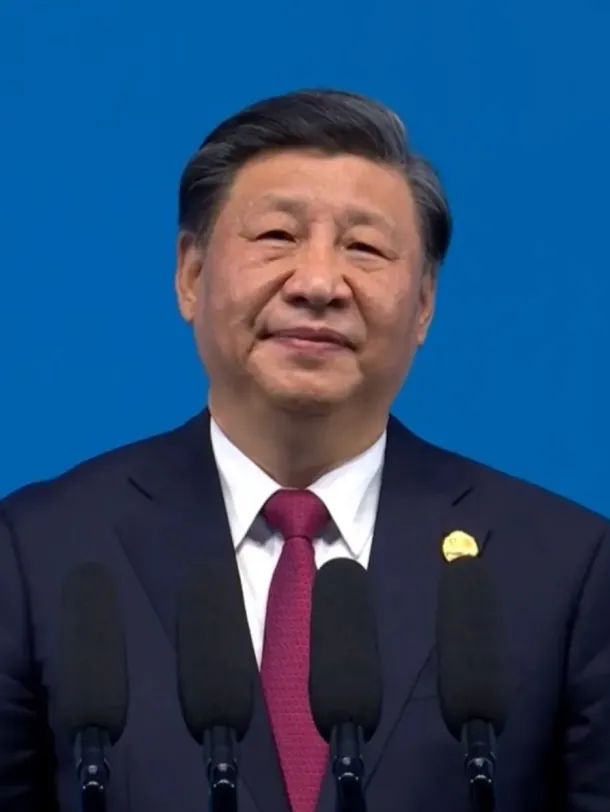

In China, where media are defined as tools to manufacture public trust in the Chinese Communist Party (CCP) and the state, such concerns are not even secondary. If there are journalists in China wringing their hands over the impact of AI on professional reporting or public trust, those conversations are happening privately, and quietly. The whole notion of the public interest as fundamental to journalism has been eclipsed under the leadership of Xi Jinping by the most robust application of press controls seen at any time in the reform era since the late 1970s. The possible exception is the three years immediately following the Tiananmen Massacre, which gave rise to the concept of “public opinion guidance” — a policy that to this day delivers on the firm conviction that the CCP must control news and public discourse to maintain the stability of the regime.

In one of his earliest actions as the country’s top leader, Xi Jinping in 2013 introduced an internal Party directive called “Document 9” that expressly opposed the idea of public interest journalism, which it panned as “the West’s idea of journalism.” “The ultimate goal of advocating the West’s view of the media is to hawk the principle of abstract and absolute freedom of press, oppose the Party’s leadership in the media, and gouge an opening through which to infiltrate our ideology,” the directive said.

Later that same year, as Xi cracked down aggressively on more independent voices on Chinese social media — the now mostly forgotten “Big Vs” — the Party introduced the concept of “transmitting positive energy to society” (传播社会正能量). If the role of the press in China had become more ambiguous through the 2000s, amid a precarious but determined movement of journalistic professionalism, it had now become clear again. Its place was to serve the larger goals of the Party and the nation, not of the public.

Today, it is virtually impossible to talk about the truth in China in any way other than that bandied about on the stage at the 2026 Internet Media Forum. “Authenticity” is fundamentally about positivity. And this means that discussions in China about the impact of AI in the media revolve almost exclusively around its production-related advantages.

A series of thematic sessions held alongside the main forum in Zhengzhou is a case in point. Discussions treated propaganda and corporate public relations as a seamless continuum, exploring how official and corporate messaging can “go viral” by adding a more human touch to narratives. The session brought together participants including Chang’an Avenue Insider (长安街知事), a public account under the capital’s state-run Beijing Daily, the new media department of the CCP’s People’s Daily, and Weibo’s executive editor-in-chief.

On Monday, a commentary at People’s Daily Online drove the point home, suggesting that AI could be effective in “adding warmth” to positive energy — in other words, that propaganda could be made to feel more human and relatable. The piece, published the day after the forum closed, described AI as having “deeply penetrated the full chain of content planning, newsgathering, editing and distribution” in mainstream media. Once again, there was not the merest frisson of concern. The integration of AI with content meant for public consumption, and of course public opinion guidance, was celebrated as a milestone.

During panel discussions at the forum, the People’s Daily Online commentary noted, keywords like “professionalism,” “staying grounded,” “seizing trends,” and “innovation” were on everyone’s tongues. Even the deeply human concept of “empathy” (同理心), which has entered into sharply different discussions of journalism in the West, made an appearance. All of these are qualities with the potential to ground journalism in the human experience, and form the connective tissue between journalism and the public it is meant to serve. In this context, however, they are production techniques and rhetorical devices to be achieved with the helping hand of artificial intelligence.

It was no accident that posters and images of the Zhengzhou forum came with the usual heavy dose of robot imagery. AI robots took to the stage alongside dancing child performers, the pairing an oddly dissonant state message about the humanity of AI. China’s dream of an army of compliant Edgar Snows, all reporting empathetically on the elevated humanity of the Party, cannot be far off.