DeepSeek’s Democratic Deficit

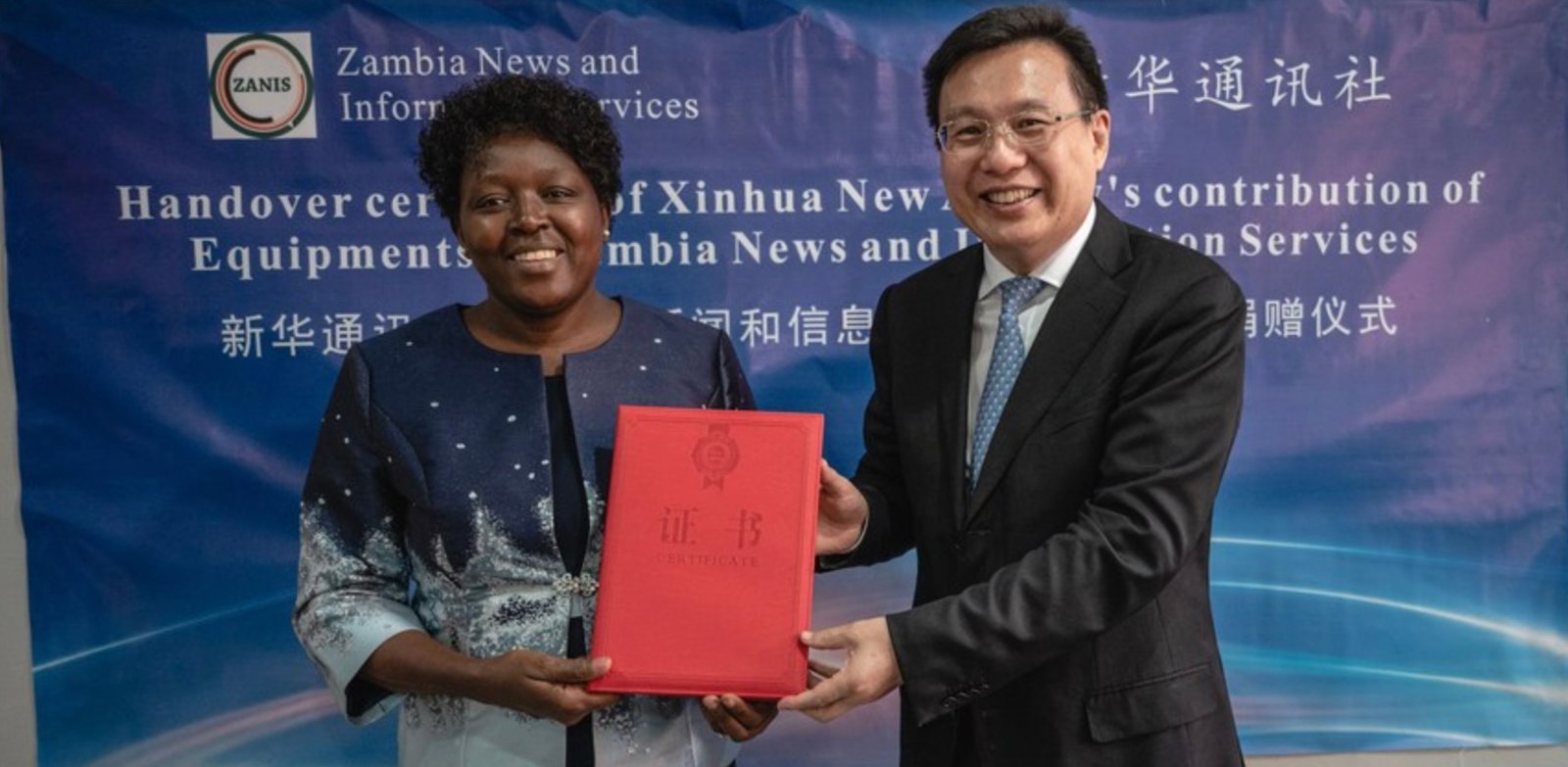

DeepSeek’s R1 AI model, released in February, has been rapidly adopted by governments and companies worldwide, including India’s government and the American tech multinational Nvidia. Meanwhile, China’s government has promoted the model as democratizing access to AI. “DeepSeek has accelerated the democratization of the latest AI advancements,” China’s embassy in Australia declared in March of this year.

Much of the hype around DeepSeek is premised on the idea that the model can be “de-censored,” with its embedded PRC biases trained out. But our research at the China Media Project questions this premise, suggesting that the model risks becoming a vehicle for the global spread of Chinese Communist Party narratives and authoritarian influence rather than a genuine democratization of information. Our work indicates that the model’s biases run deeper than simple censorship, and that even “uncensored” versions continue to spread CCP disinformation, for example by claiming that Taiwan has been “part of China since ancient times.”

CMP researcher Alex Colville writes: “Open-source can mean, broadly speaking, greater democratic decision of the benefits of AI. But if crucial aspects of the open-source AI shared across the world perpetuate the values of a closed society with narrow political agendas — what might that mean?”

Learn more about this important issue at the China Media Project.